Overview

In this activity, you will practice image transformations, including geometric transformations. You will examine several image filters, which create feature maps by applying a function to small regions of the original image. You will also learn several techniques for extracting interesting features and motion from images, and an algorithm that tracks an object by its color.

The GitHub repository for this assignment will contain starter code files. Add your code to the appropriate file, as directed by the TODO comments.

Geometric Transformations

Resizing images

The most basic transformation is resizing an image. The resize function will scale a picture up or down, and can also be used to stretch an image. You must give the resize function a source image and the dimensions of the new picture, as a (width, height) pair. If you would rather scale the image by multiplying its dimensions by a factor, pass a “nonsense” size of (0, 0) and specify the fx and fy optional inputs instead.

The matchSize function below takes in two images. It resizes the second image to match the dimensions of the first image, and returns the resized image.

Try this: Run the sample calls to this function in geomPractice.py. Add more calls, including some extreme resizing using some of the large and tiny images.

Next is a partial program that you will complete. This program scales an image up and down, displaying it in the same window so that it seems to pulse larger and then smaller. The only piece missing is the actual resizing of the image.

    import cv2

    def pulseSize(img):
        deltaSize = 0.05    # each pass of the loop, the scaling factor changes by this amount
        currScale = 1.0     # the scaling factor for resizing the image
        while True:         # a while loop like the one we use when displaying video
            cv2.imshow("Pulse", img)
            x = cv2.waitKey(30)
            if x >= 0 and chr(x) == 'q':    # just like with video, pressing q ends the loop
                break
            # if the scaling factor gets too large or too small, reverse its direction
            if (currScale > 3.0) or (currScale <= 0.2):
                deltaSize = -deltaSize
            currScale += deltaSize    # update the scaling factor before the next pass

In writing this function, I had to be very careful about checking the bounds on currScale. In particular, I had to set the lower bound higher than I wanted to avoid a failure by the resize function.

We defined currScale to be a floating-point value. All floating-point values are approximations of real numbers, and so roundoff error can accumulate, leading to values that are not precise. In geomPractice.py this function has a print statement included that will print out the values of currScale. Try uncommenting that print statement and look at the values it has. Notice how their least significant digits are off from what we would expect!

When working with floating-point numbers, you should always use inequalities to compare values, because the roundoff error makes direct equality difficult. When we do need something like direct equality, we often use an alternate method: compute the difference between the two floating point values we want to be equal, and consider them equal if the difference is below some threshold: abs(currScale - 0.1) <= 0.01 would approximate checking if currScale is equal to 0.1, allowing for an error of up to 0.01.
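The tolerance check described above can be tried in a few lines. This is a small sketch: the helper name `nearlyEqual` is made up for illustration, and `math.isclose` is the standard-library function that does the same job.

```python
import math

def nearlyEqual(a, b, tolerance=0.01):
    """Treat a and b as equal when they differ by no more than tolerance."""
    return abs(a - b) <= tolerance

total = 0.1 + 0.2
print(total == 0.3)               # False: roundoff error breaks exact equality
print(nearlyEqual(total, 0.3))    # True
print(math.isclose(total, 0.3, abs_tol=0.01))   # True

# Stepping a scale factor down from 1.0 in steps of 0.05, as pulseSize does
currScale = 1.0
for _ in range(18):
    currScale -= 0.05
print(nearlyEqual(currScale, 0.1))   # True, whatever the last few digits turn out to be
```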

Try this: We want to add steps to resize the input image, just inside the while loop, and then we want to display the resized image.

- Add a call to resize just above the cv2.imshow line inside the while loop. Pass it the input image, and set the image dimensions to (0, 0). Set the fx and fy optional inputs to be currScale.

- Be sure to save the image returned by resize into a new variable

- Change the imshow line to show your new resized image

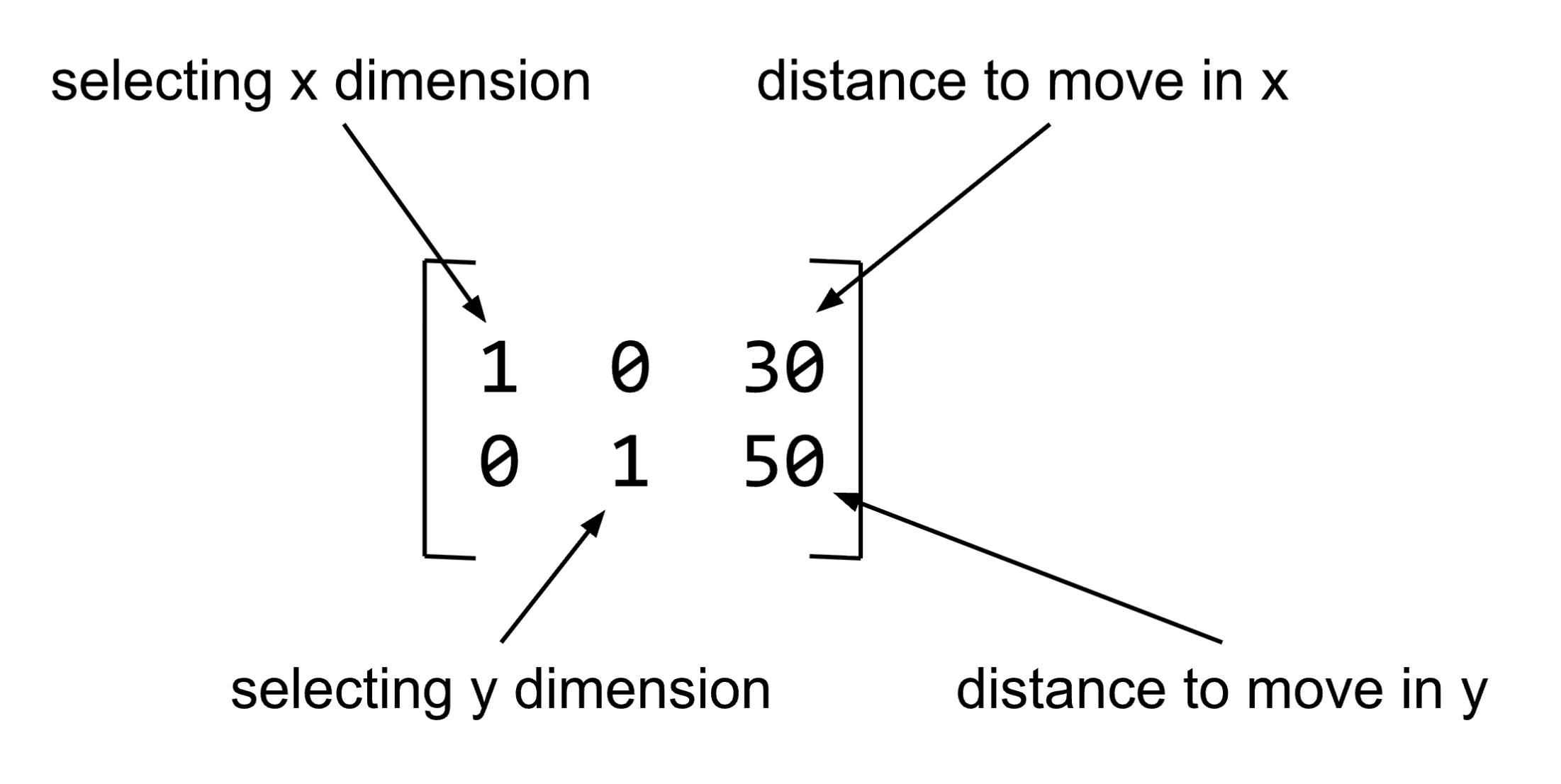

Translation

The example in Figure 1 below illustrates how to create a translation matrix and make a new image with the old image moved to a new location. The first row of the translation matrix selects the column dimension, and moves the colors 30 pixels to the right. The second row of the matrix selects the row dimension, and moves the colors 50 pixels down.

Below is a picture of the data in the matrix, and what each part means.
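In equation form, assuming the usual convention that x counts columns and y counts rows, the 2x3 translation matrix acts on each pixel position as follows. The symbols t_x and t_y are names chosen here for the two shifts; in the example they are 30 and 50.

```latex
\begin{bmatrix} x' \\ y' \end{bmatrix}
=
\begin{bmatrix} 1 & 0 & t_x \\ 0 & 1 & t_y \end{bmatrix}
\begin{bmatrix} x \\ y \\ 1 \end{bmatrix}
=
\begin{bmatrix} x + t_x \\ y + t_y \end{bmatrix}
```

The 1s and 0s leave each coordinate unchanged, and the third column adds the shift, so a pixel at (10, 20) lands at (40, 70).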

The script below shows how to create a matrix to perform image translation, using the warpAffine function. A copy of this script is in your geomPractice.py file. Try this script, and try changing the 30 and 50 to different values, including negative numbers.

    import cv2
    import numpy as np

    img = cv2.imread("SampleImages/snowLeo2.jpg")
    # warpAffine needs the original size to know how big a "canvas" to show the result on
    (rows, cols, dep) = img.shape
    # warpAffine expects a 2 by 3 NumPy array of 32-bit floats; np.float32 is like
    # np.array, but it creates an array where the data are 32-bit floats
    transMatrix = np.float32([[1, 0, 30], [0, 1, 50]])  # change 30 and 50
    # warpAffine takes the original image, the 2x3 matrix, and the size of the new image
    transImag = cv2.warpAffine(img, transMatrix, (cols, rows))
    cv2.imshow("Original", img)
    cv2.imshow("Translated", transImag)
    cv2.waitKey(0)

Try this: Experiment with this script in your geomPractice.py file, until you understand how to specify the change in x or y positions (only change the 3rd value in each row of the matrix).

CHOOSE ONE OF THE TWO TASKS BELOW TO COMPLETE

Try this: In the geomPractice.py file, make a copy of the videoProcess and processImage functions, and rename them jitterVideo and jitterImage. Then do the steps below: take them one at a time and test each before moving forward.

Modify the jitterImage function to:

- Generate a random integer in the range from -100 to +100 for how far to translate the image in the x direction

- Do the same for the y direction

- Create the 2x3 translation matrix, as shown above, using your two new variables for the translation distances

- Change the call to image.copy() so that it calls warpAffine instead, using the translation matrix you just defined

Modify the jitterVideo function to:

- Call jitterImage instead of processImage

Test your program: how does it look? You could modify the range for your random offsets to get a nice “jittery” effect, or you could add in a delay where it only generates a new offset every k frames, instead of each frame.
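The "new offset every k frames" idea can be sketched on its own, without any video. The generator name `jitterOffsets` and its parameters are hypothetical, chosen for this illustration; in your program the same counting logic would sit in the video loop.

```python
import random

def jitterOffsets(numFrames, k=5, maxShift=100):
    """Yield one (tx, ty) offset per frame, choosing a new offset every k frames."""
    tx, ty = 0, 0
    for frameNum in range(numFrames):
        if frameNum % k == 0:
            # Time for a fresh random offset; otherwise keep the previous one
            tx = random.randint(-maxShift, maxShift)
            ty = random.randint(-maxShift, maxShift)
        yield (tx, ty)

offsets = list(jitterOffsets(12, k=4, maxShift=20))
print(offsets)   # three runs of four identical offsets
```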

Try this: In the geomPractice.py file, make a copy of the videoProcess and processImage functions, and rename them bounceVideo and bounceImage. Then do the steps below.

Modify the bounceImage function to:

- Take in two extra inputs, tx and ty

- Create the 2x3 translation matrix, as shown above, using tx and ty for the translation values in the matrix

- Change the call to image.copy() so that it calls warpAffine instead, using the translation matrix you just defined

- Make the new image size 2 times the width and 2 times the height of the original image size

Modify the bounceVideo function to:

- Set up four variables before the while loop: tx, ty, deltaX, and deltaY. Initialize tx and ty to be zero, and set deltaX and deltaY to be 3.

- Change the call to processImage to call bounceImage instead, and pass tx and ty to it as well as the frame

- At the bottom of the while loop, add steps to update tx and ty by adding deltaX and deltaY to them

- Add steps to check whether it is time to bounce (this will be similar to the maskVideo program from ICA 11)

    - If tx is less than or equal to zero, then negate deltaX

    - If ty is less than or equal to zero, then negate deltaY

    - If tx plus the image width is greater than or equal to 2 times the image width, then negate deltaX

    - If ty plus the image height is greater than or equal to 2 times the image height, then negate deltaY

Test your program: Does the video feed bounce the way you expected it to?
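The update-and-bounce arithmetic can be checked on plain numbers before it goes into the video loop. This is a sketch under a few assumptions: the helper name `bounceStep` is made up, the frame is taken to be 100 by 80 pixels, and the update happens before the bounce check, as in the steps above.

```python
def bounceStep(tx, ty, deltaX, deltaY, width, height):
    """Advance the offset by one frame and reverse direction at the canvas edges."""
    tx += deltaX
    ty += deltaY
    # The canvas is 2*width by 2*height, so the frame has hit the right or
    # bottom edge once tx reaches width or ty reaches height
    if tx <= 0 or tx + width >= 2 * width:
        deltaX = -deltaX
    if ty <= 0 or ty + height >= 2 * height:
        deltaY = -deltaY
    return tx, ty, deltaX, deltaY

tx, ty, deltaX, deltaY = 0, 0, 3, 3
positions = []
for _ in range(500):
    tx, ty, deltaX, deltaY = bounceStep(tx, ty, deltaX, deltaY, width=100, height=80)
    positions.append((tx, ty))

xs = [p[0] for p in positions]
print(min(xs), max(xs))   # 0 102
```

Because the step size of 3 does not divide the width evenly, the offset overshoots the edge by a couple of pixels before turning around. That is harmless here, but it is one reason to compare with inequalities rather than equality.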

Rotation

If we want to rotate an image, we can use warpAffine, but we need to create a rotation matrix to tell it what to do. Fortunately, OpenCV provides a helper function, getRotationMatrix2D, that will do the calculations for us. It takes three inputs:

- The (x, y) coordinates of the pixel we want the rotation to rotate around. Imagine sticking a pin into the picture at that location and then rotating the image around the pin.

- The angle (in degrees) that we want to rotate the image; positive angles rotate counter-clockwise, negative angles rotate clockwise

- A scaling factor, which resizes the image while preserving its aspect ratio: a value of 1 causes no change in size
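To see what these three inputs produce, the sketch below builds the 2x3 matrix by hand with NumPy, following the formula given in OpenCV's documentation for getRotationMatrix2D. The function name `rotationMatrixSketch` is made up for illustration; in your own code you would simply call the OpenCV helper.

```python
import math
import numpy as np

def rotationMatrixSketch(center, angle, scale):
    """Build a 2x3 rotation matrix from a center point, an angle in degrees, and a scale."""
    (cx, cy) = center
    alpha = scale * math.cos(math.radians(angle))
    beta = scale * math.sin(math.radians(angle))
    return np.float32([
        [alpha, beta, (1 - alpha) * cx - beta * cy],
        [-beta, alpha, beta * cx + (1 - alpha) * cy],
    ])

rotMat = rotationMatrixSketch((200, 150), 90, 1)

# Apply the matrix to positions written as (x, y, 1)
pinAfter = rotMat @ np.float32([200, 150, 1])
rightAfter = rotMat @ np.float32([300, 150, 1])
print(pinAfter)     # the pin location stays at (200, 150)
print(rightAfter)   # a point to the right of the pin moves to (200, 50), straight above it
```

A point to the right of the pin ending up above it is a counter-clockwise turn on screen, since y grows downward in image coordinates.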

The script below rotates a picture by different amounts, writing the amount in white in the lower right corner of the new window. Examine the code to understand how the call to getRotationMatrix2D works, in conjunction with warpAffine.

    import cv2

    img = cv2.imread("SampleImages/californiaCondor.jpg")
    cv2.imshow("Original", img)
    (rows, cols, depth) = img.shape
    for angle in [30, 45, 60, 90, 120, 135, 150, 180, -45, -90, -180]:
        rotMat = cv2.getRotationMatrix2D((cols / 2, rows / 2), angle, 1)
        # warpAffine needs whole-number dimensions, so convert the scaled sizes to int
        rotImg = cv2.warpAffine(img, rotMat, (int(1.5 * cols), int(1.5 * rows)))
        cv2.imshow("Rotated", rotImg)
        cv2.waitKey(0)

This shows a series of different angles, all with the same center point.

Try this: Try varying the center point, which here is set to be the center of the picture. Maybe try rotating around (100, 100) then (200, 200), then (400, 400), etc. How does the result change?

Try this: In the geomPractice.py file, make a copy of the videoProcess and processImage functions, and rename them spinVideo and spinImage. Then do the steps below.

Modify the spinImage function to:

- Take in one extra input, an angle

- Call getRotationMatrix2D for the rotation around the center point, with the input angle (and scaling factor = 1)

- Change the call to image.copy() so that it calls warpAffine instead, using the rotation matrix returned by getRotationMatrix2D

- Make the new image size either the same size or twice the size, depending on which look you like

Modify the spinVideo function to:

- Set up an angle variable before the loop, and set it to 0 initially

- Inside the loop, change the call from processImage to spinImage, and pass angle to it

- Add an update step that adds some fixed amount to the angle (try small values like 1, 2, 5, or larger ones like 10 or 20)

Test your program: How does it work? For which changes to the angle does the result look smooth versus jumpy?

General warping

The end of the reading also showed how to use the helper function getAffineTransform to specify a general warping process.

If you want the challenge, try using general warping to twist or stretch the video feed.

Make another copy of the videoProcess function and its helper, and modify them to do this:

- Choose 3 reference points in the original image

- Initially, set the new points to the same (x, y) locations

- Each new frame, move the new points a small amount, causing the image to warp

- Display the warped image

- At some point, reverse the change in direction for the new points, gradually returning toward the original image

Image Filters

Morphological filters

First we’ll briefly summarize the different morphological filters. They are discussed in much more detail in the readings, so bringing that up while you work on this activity is a good idea.

Dilation and Erosion: Much like blurring, dilation and erosion determine the value for a pixel based on a neighborhood of pixels from the original image. Unlike the default in blurring, we can select the shape of the neighborhood, as well as its size, to be either rectangular, elliptical, or cross shaped. With dilation, the value of each channel of a pixel is the maximum value of that channel in any pixel in its neighborhood. Erosion is the opposite: the value of each channel of a pixel is the minimum value of that channel in any pixel in its neighborhood.

These can be used to emphasize and thicken edges or regions of color in a particular part of an image.

Opening and Closing: Sometimes we want to preserve both dark and light features of an image. Opening and closing combine dilation and erosion. Opening an image means first performing an erosion of the image, then a dilation, using the same size and shape of neighborhood. Closing first dilates the image, then erodes it.

Both these operations are good at removing noise and small details from images.

Top-hat and Black-hat: The Top-Hat filters do the opposite of opening and closing. Instead of removing the fine details, these filters keep just the fine details. The white top-hat takes the difference between the original image and the opening of the image. The black top-hat takes the difference between the closing and the original image.

These filters may be used for feature extraction tasks, or image enhancement.

Morphological Gradient: The morphological gradient takes the difference between the dilation and erosion of an image. This emphasizes the places where there is a change in color, and can be useful to enhance edges.

In the SampleCode folder provided to you is a demo program for how to use the morphological filters: simpleMorph.py. I have copied the code below.

    import cv2

    img = cv2.imread("SampleImages/bristleconePine.jpg")

    # Filter types: MORPH_DILATE, MORPH_ERODE, MORPH_OPEN, MORPH_CLOSE, MORPH_TOPHAT, MORPH_BLACKHAT, MORPH_GRADIENT
    morphType = cv2.MORPH_DILATE

    # Filter shapes: MORPH_RECT, MORPH_ELLIPSE, MORPH_CROSS, plus you can define your own
    morphShape = cv2.MORPH_RECT

    kernelObj = cv2.getStructuringElement(morphShape, (5, 5))
    newImg = cv2.morphologyEx(img, morphType, kernelObj)

    cv2.imshow("Original", img)
    cv2.imshow("Morphed", newImg)
    cv2.waitKey()

Change the image loaded to any one of your choice. Then experiment with choosing different filter types (the morphType variable), different neighborhood shapes (the morphShape variable), and different neighborhood sizes (defined in the call to getStructuringElement).

CHOOSE ONE OF THE FOLLOWING TO COMPLETE:

Try this: Here we can practice with smoothing images to make thresholding work better, or cleaning up the result of thresholding.

In the earlier activity on masks and thresholds, you worked on isolating coins and balls using color and grayscale thresholding. If you didn’t do that, either go back now or skip this activity and go on to the next one. Otherwise, go find your code, and choose the best examples you have of thresholding separating the ball or the coins from the background.

Copy your code to this project, and experiment with modifying it in various ways:

- Apply a morph filter like opening or closing (or erosion/dilation) to the original image, before you use thresholding on it.

    - Analyze the results: does it make the overall program work better, worse, or about the same?

    - Try varying the neighborhood shape and size

    - Try different morphological filters: do any of them improve your results?

- Apply a morph filter like opening or closing to the threshold image (to clear up some of the noise, fill in partially detected shapes, etc.)

    - Analyze the results: does it make the overall program work better, worse, or about the same?

    - Try varying the neighborhood shape and size

    - Try different morphological filters: do any of them improve your results?

Try this: Here we will just practice with using the morphologyEx program in a fun way. The goal is to have the morphing fluctuate over time between small effects (small neighborhood sizes) to large effects, and back again. Start by copying one of the video-reading programs, ideally one with the processImage helper.

- Choose one of the morphological filters described above

- Before the while loop, set up a variable kSize to be used as the neighborhood size

- Also set up a variable deltak to hold the value 2; this is how much kSize will change from one frame to the next

- In the processImage function (or in the middle of the loop, if you don’t have it), set up a neighborhood “structuring element” object with (kSize, kSize) as its size

- Apply morphologyEx to the current frame, using the filter you chose and the structuring element you just created

- Display the morphed image instead of the original

- At the end of the while loop, add an if statement that checks if the neighborhood size is too large or too small (too large might be 21 or 25, too small would be 3). If true, do: deltak = -deltak. This will change how kSize is changing from frame to frame

- After the if statement (and still inside the loop), update kSize by adding deltak to it
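The kSize bookkeeping can be tested on its own, without a camera. This is a sketch: the helper name `nextKernelSize` and the limits of 3 and 21 are choices made for illustration. Note the starting value of 5: if kSize started exactly at the lower limit, the very first check would flip deltak and push kSize below 3.

```python
def nextKernelSize(kSize, deltak, smallest=3, largest=21):
    """Return the updated (kSize, deltak) pair for the next frame."""
    if kSize >= largest or kSize <= smallest:
        deltak = -deltak          # reverse direction at either limit
    return kSize + deltak, deltak

kSize, deltak = 5, 2              # start above the lower limit so the first check does not flip
sizes = []
for _ in range(30):
    sizes.append(kSize)           # the size this frame would use
    kSize, deltak = nextKernelSize(kSize, deltak)

print(sizes)
print(min(sizes), max(sizes))     # 3 21
```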

Blurring an image

Blurring is used to remove noise and variation from an image, making other operations work better. Look at the code samples in the reading to remind yourself how to use blur and GaussianBlur.

CHOOSE ONE OF THE FOLLOWING TO COMPLETE:

Try this: This task is similar to the first task for morphological filters. Here we can practice with smoothing images to make thresholding work better, or cleaning up the result of thresholding.

In the earlier activity on masks and thresholds, you worked on isolating coins and balls using color and grayscale thresholding. If you didn’t do that, skip this activity and go on to the next one. Otherwise, go find your code, and choose the best examples you have of thresholding separating the ball or the coins from the background.

Copy your code to this project, and experiment with modifying it in various ways:

- Apply either the basic blur or Gaussian blur to the original image, before you use thresholding on it.

    - Analyze the results: does it make the overall program work better, worse, or about the same?

    - Try varying the neighborhood size

    - Try switching between basic and Gaussian blur: does either work better at improving the results?

- Apply blurring to the threshold image (to clear up some of the noise)

    - Analyze the results: does it make the overall program work better, worse, or about the same?

    - Try varying the neighborhood shape and size

    - Try both simple and Gaussian blur: does either of them improve your results?

Try this: Here we will just practice with using blurring on frames from a video. The goal is to have the blurring fluctuate over time between small effects (small neighborhood sizes) to large effects, and back again. Start by copying one of the video-reading programs, ideally one with the processImage helper.

- Choose basic or Gaussian blurring

- Before the while loop, set up a variable kSize to be used as the neighborhood size (use an odd value, since GaussianBlur requires odd sizes)

- Also set up a variable deltak to hold the value 2; this is how much kSize will change from one frame to the next

- In the processImage function (or in the middle of the loop, if you don’t have it), set up the neighborhood size as the tuple (kSize, kSize)

- Apply the blur you’ve chosen to the current frame, using the neighborhood size you just set up

- Display the blurred image instead of the original

- At the end of the while loop, add an if statement that checks if the neighborhood size is too large or too small (too large might be 21 or 25, too small would be 3). If true, do: deltak = -deltak. This will change how kSize is changing from frame to frame

- After the if statement (and still inside the loop), update kSize by adding deltak to it

Edge Detection

Edges, in computer vision, are just patches where the brightness in an image changes dramatically from one side of the patch to another. Your readings looked at two edge detection methods, the very basic Sobel filter and the more complex Canny algorithm (review that section if you need to).

We will focus on implementing these two filters on a video feed. Looking at the edge detection on a video feed, and being able to change the view, put objects in front of the camera, hold up items with words on them, all these things help to strengthen your intuitions about what edges are, and what these functions can do for you.

Try this: Just like the previous sections, start with a basic video program. Then, either copy the code example for Sobel from the readings (also found in EdgesAndLines.py in SampleCode), or use the stripped-down version here:

Apply this code to the frames of the video. No need to vary anything here, just run the filter steps on the frame and display the result.

Try this: Do the same thing as the previous example, except run the Canny algorithm on the frame instead. Below are some examples of Canny run with different input parameters (notice that Canny can take in a color image).

Recall that the two numbers input to Canny are threshold values: a lower threshold and an upper threshold. They are compared against the strength of the brightness change (the gradient) at each pixel, not against the brightness itself.

- Pixels whose gradient strength is below the lower threshold are set to zero: they are discarded as edges

- Pixels whose gradient strength is above the upper threshold are set to 255

- Pixels whose gradient strength falls between the two thresholds are set to 255 if they connect to a pixel that is 255, otherwise they are set to zero

One of the issues with Canny is figuring out the best set of threshold values for any given situation.

Add to your Canny video program: Let’s add a method to the Canny video program for the user to change the threshold values.

- Before the while loop, set up two variables: lowThresh and uppThresh, and initialize them to some reasonable initial values (maybe 100 and 200, for instance)

- Pass lowThresh and uppThresh to Canny instead of hard-coding numbers into the call

- Add more cases to the if statement that checks what key the user pressed from waitKey

    - If the user presses the w key, then add a small amount (between 1 and 5) to lowThresh

    - If the user presses the s key, then subtract a small amount from lowThresh

    - If the user presses the e key, then add a small amount to uppThresh

    - If the user presses the d key, then subtract a small amount from uppThresh

    - In every case, be sure the thresholds do not go below 0 or above 255
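The key handling can be written and tested separately from the video loop. This is a sketch: the function name `adjustThresholds` and the step of 5 are illustrative choices, and in the real program `key` would come from converting the waitKey result with chr.

```python
def adjustThresholds(key, lowThresh, uppThresh, step=5):
    """Return updated (lowThresh, uppThresh) for a key press, kept within 0 to 255."""
    if key == 'w':
        lowThresh += step
    elif key == 's':
        lowThresh -= step
    elif key == 'e':
        uppThresh += step
    elif key == 'd':
        uppThresh -= step
    # Clamp both values so they never leave the 0 to 255 range
    lowThresh = max(0, min(255, lowThresh))
    uppThresh = max(0, min(255, uppThresh))
    return lowThresh, uppThresh

low, upp = 100, 200
for key in "wwsed":
    low, upp = adjustThresholds(key, low, upp)
print(low, upp)    # 105 200
```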

Look at how different threshold values change what Canny shows you. Try holding up books or other items with writing on them. Hold up a ball to see whether you can get a clean edge around the ball.

Finding Contours

Using findContours on threshold images

In an earlier activity, you tried the threshold function and the inRange function to isolate coins and balls in still images (and in video as well).

Examine the program called findPink in contourPractice.py. This program is designed to find the bright pink lacrosse ball from my gray bag of computer vision supplies. The program relies on two tuples, pinkLow and pinkHigh, which define the color range for the pink ball.

Try this program, borrowing my pink ball, or choosing an object of your own.

To determine color ranges for yourself:

- Take a picture of the object with the webcam on your computer (Photo Booth on Mac, or the Camera app on Windows)

- Use a color picker tool to read the RGB values from various spots on the ball (Digital Color Meter on Mac)

- Use an online converter to translate those into HSV values

- To get OpenCV’s values, divide the Hue value by 2, and scale the other two to the range from 0 to 255, instead of 0 to 100
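The last two steps can also be done in code instead of with an online converter. This sketch uses Python's standard colorsys module; the function name `rgbToOpenCVHSV` is made up for illustration, and the final color is just an example pink, not the actual value of pinkLow or pinkHigh.

```python
import colorsys

def rgbToOpenCVHSV(r, g, b):
    """Convert 0-255 RGB values to OpenCV's 8-bit HSV ranges (H 0-179, S and V 0-255)."""
    # colorsys works with fractions from 0 to 1, for both its inputs and its outputs
    h, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
    # Multiplying h by 180 is the same as taking the hue in degrees and dividing by 2
    return (round(h * 180) % 180, round(s * 255), round(v * 255))

print(rgbToOpenCVHSV(255, 0, 0))      # (0, 255, 255)
print(rgbToOpenCVHSV(0, 255, 0))      # (60, 255, 255)
print(rgbToOpenCVHSV(255, 105, 180))  # an example pink
```

Reading several spots on the object and taking the smallest and largest value of each channel gives a starting point for the low and high tuples.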

Isolating the ball contour

Using a combination of the contour’s area and its shape, pick one contour that seems most likely to be the ball. You can eliminate any contours that have very small area, and then compare the area of each of the remaining contours to the area of its minimum enclosing circle: the closer the two areas are, the rounder the contour. Or you could see which contour has the most points that lie on the boundary of the minimum enclosing circle (that takes a bit more math).

Do these calculations in the thresholdPink function, and only draw the most likely ball contour on the original image.

Trying examples on your own

Go back to the programs I provided, or the ones you wrote, that used thresholding to isolate balls or coins in images. Try applying findContours to the best results you got from thresholding (possibly incorporating morphing or blurring). Can you determine where the balls or coins are in the images, using size or shape?

Optional Challenge

Try using thresholding and findContours to find an outline around your hand held up in front of the camera. You might need an external camera for this, because you might need to use as simple and blank a background as possible (like the whiteboard, or a blank wall).

From the size or shape of the contour, can you determine whether your hand is in a fist or a flat palm, or holding up one or two fingers?

Simple motion detection

Simple motion detection just computes the difference between two adjacent frames in the video. Our motion detector then adds the differences found in the blue, green, and red channels, to ensure we don’t miss anything.

Try out the simpleMotion function in motionPractice.py. There are three sample calls; try some other videos as well.

Try this: Use threshold to convert diff2 into a black and white image, and then apply it as a mask to the original frame, and display the result. How well does it work? Optionally, try using opening or closing (morphological filters) to smooth and clean up the mask before applying it.

Background subtraction

The readings talked about three different background subtraction methods, but here we will focus on the MOG2 and KNN methods that OpenCV implements.

In motionPractice.py, there is a function called backgroundSubtract, which takes in a video source and a number for which model you want to run: 0 for MOG2 and 1 for KNN. Try out this program on various video feeds, and see how the MOG2 and KNN compare. When do they work well, and when do they struggle?

Try this: Pick one of these options for further exploration:

- Look at the documentation for the createBackgroundSubtractor functions. They each have optional inputs, including history, as well as thresholds for each algorithm. Try varying the history and/or the threshold values: how do those affect the behavior of the program?

- Use findContours to identify moving objects from the mask image. Can you tune it to find interesting moving objects and not noise?

- Background subtraction is one step in a process called background substitution, where we substitute a different background for the black parts of the mask. Implement this:

    - Pick an image

    - Resize it to match the video frame size

    - Define a second mask to be the opposite of the one from the background subtraction (0 where it has 255, and vice versa)

    - Use the second mask to select just the background pixels you need

    - Add together the masked foreground and the masked background

    - Display the result

    - (Note that this is the same general process as question 1 on Homework 3)

Color-tracking with CamShift

Be sure to look through the readings and other materials about the CamShift algorithm. It is a clever, real-time color tracking algorithm. It is one of the first we’ve seen that actually maintains information across frames of a video (which is what “tracking” means, as opposed to “detecting”).

The camshift function in your colorTracking.py file takes in a reference image and then runs the CamShift algorithm to track the object and display what it has found. Try it out: the default reference image is for one of the bright blue floor hockey balls in my collection.

Read through the code and make sense of what it is doing.

Try this: Create a reference image for a different colored object, and try tracking that instead.